words Al Woods

One thing is clear: the CPU won’t stay the way it used to be. It isn’t just going to get better; it’s going to be different. Modern technology moves fast. If you think about the central processing unit, you’ll probably picture one of AMD’s or Intel’s creations.

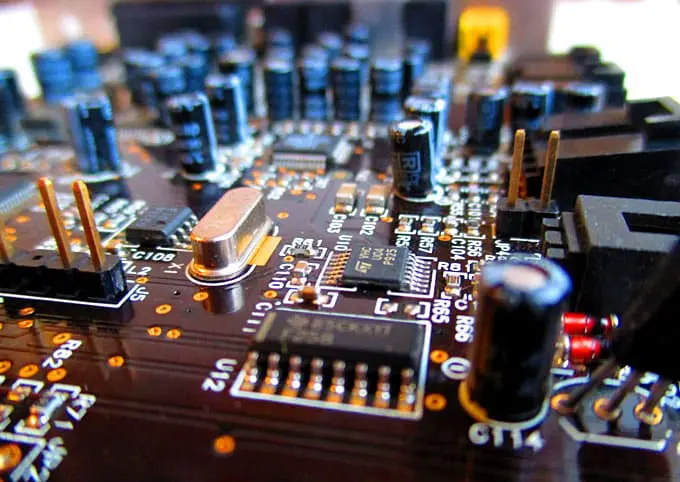

The CPU has undergone many transformations to become what it looks like today. The first major challenge it faced dates back to the early 2000s when the battle for performance was in full swing.

Back then, the main rivals were AMD and Intel. At first, the two competed by raising clock speeds. This went on for quite a while, since each increase came relatively easily. However, physical limits meant this rapid growth was bound to come to an end.

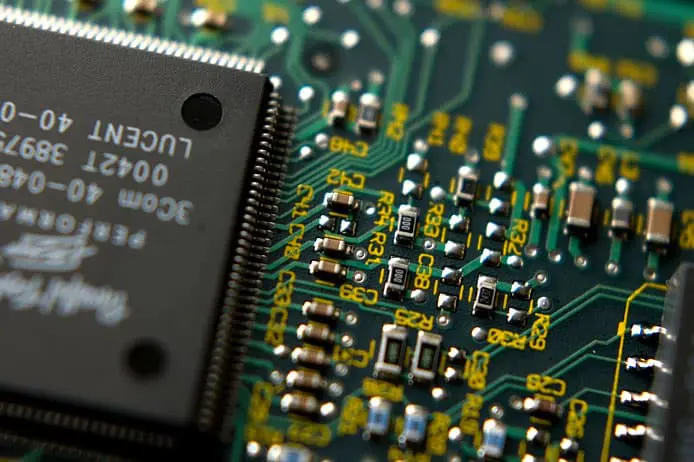

According to Moore’s Law, the number of transistors on a chip doubles roughly every 24 months. Transistors had to become smaller so that more of them could fit on a chip. More transistors generally meant better performance. However, pushing clock speeds higher on ever denser chips also produced more heat, which required massive cooling. The race for speed therefore turned into a fight against the laws of physics.
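The doubling rule is easy to put into numbers. The sketch below is only an illustration: the function name `projected_transistors` and the one-million-transistor starting chip are assumptions made for the example, not figures from any real processor.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Project a transistor count, assuming one doubling per period."""
    return initial_count * 2 ** (years / doubling_period_years)


# Hypothetical starting point: a chip with one million transistors.
start = 1_000_000
for years in (2, 10, 20):
    count = projected_transistors(start, years)
    print(f"after {years:>2} years: {count:,.0f} transistors")
```

Twenty years at one doubling every 24 months means ten doublings, or a factor of 1,024, which is why the pressure to shrink transistors grew so quickly.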

It didn’t take long for a solution to appear. Instead of increasing clock speeds, manufacturers introduced multi-core chips in which each core ran at the same clock speed. Thanks to that, computers could handle multiple tasks at the same time more effectively.

The strategy ultimately prevailed, but it had its drawbacks, too. The introduction of multiple cores required developers to redesign their algorithms to run in parallel before the improvements became noticeable. This wasn’t always easy in the gaming industry, where CPU performance has always been one of the most important factors.

Another problem is that the more cores you have, the harder it is to coordinate them. It is also difficult to write code that scales well across all the cores. In fact, if it were possible to develop a 150 GHz single-core unit, it would be a perfect machine. However, silicon chips can’t be clocked that fast due to the laws of physics.
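One common way to see why extra cores don’t automatically translate into speed is Amdahl’s law. The article doesn’t name this model, so treat it as a borrowed illustration. The sketch assumes a hypothetical workload in which 90% of the work can run in parallel; the function name and that figure are assumptions for the example.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Ideal speedup on `cores` cores when only part of the work runs in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Hypothetical workload in which 90% of the work can be parallelized.
for cores in (1, 2, 4, 8, 64):
    print(f"{cores:>2} cores: {amdahl_speedup(0.9, cores):.2f}x")
```

Under this model, the serial 10% caps the speedup below 10x no matter how many cores are added, which is why a single very fast core would be so attractive if it could be built.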

The problem became so widely discussed that even the education sector joined in. Anyway, we will try to figure out the future of the chips ourselves.

## Quantum Computing

Quantum computing is based on quantum physics and the behavior of subatomic particles. Machines based on this technology are very different from the ones we have in our homes. For example, conventional computers use bits and bytes, whilst quantum machines rely on qubits. Two bits can hold only one of these combinations at a time: 0-0, 0-1, 1-0, or 1-1. Two qubits can exist in a superposition of all four at once, which, for suitable problems, allows quantum computers to explore an immense number of possibilities simultaneously.
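The difference between two bits and two qubits can be sketched in a few lines. This is a toy model rather than a real quantum simulator: the helper names `uniform_superposition` and `measurement_probabilities` are made up for the example, and the state shown is the simplest case, an equal superposition.

```python
import math
from itertools import product


def uniform_superposition(n_qubits):
    """Amplitudes for n qubits in an equal superposition of all basis states."""
    amplitude = 1.0 / math.sqrt(2 ** n_qubits)
    return {"".join(bits): amplitude for bits in product("01", repeat=n_qubits)}


def measurement_probabilities(state):
    """Probability of reading each bit pattern: the squared amplitude magnitude."""
    return {label: abs(amp) ** 2 for label, amp in state.items()}


classical_register = "01"                 # two bits hold exactly one pattern
quantum_state = uniform_superposition(2)  # two qubits carry four amplitudes

print(classical_register)
print(measurement_probabilities(quantum_state))
```

Reading the qubits still yields only one of the four patterns. The potential advantage comes from manipulating all the amplitudes together before that measurement.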

There is one more thing you should know about quantum technology, namely quantum entanglement. Particles can be entangled in pairs so that their states are correlated: measuring one tells you about the state of the other, even when the two are far apart. This property has been explored by the military for some time in attempts to replace the standard radar. One of the two particles is sent into the sky, and comparing what comes back with its ‘ground-based’ counterpart can reveal whether it interacted with an object.
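The correlation between entangled partners can be mimicked with a small, deliberately simplified sketch. It is a classical stand-in that only reproduces the statistics of measuring both particles in the same basis, so it does not capture what makes entanglement genuinely non-classical. The function name `measure_bell_pair` is an assumption for the example.

```python
import random


def measure_bell_pair(rng):
    """Simulate measuring both qubits of (|00> + |11>)/sqrt(2) in the 0/1 basis."""
    shared_outcome = rng.choice((0, 1))    # each result is 50/50 on its own
    return shared_outcome, shared_outcome  # but the two always agree


rng = random.Random(0)  # seeded so runs are repeatable
samples = [measure_bell_pair(rng) for _ in range(1000)]
agreement = sum(a == b for a, b in samples) / len(samples)
print(f"qubits agree in {agreement:.0%} of measurements")
```

Each individual result looks random, yet the pair always matches. That matching carries no usable signal on its own, which is why practical schemes rely on comparing the two sides afterwards.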

Quantum technology can also be used to process an immense amount of information. For certain classes of problems, qubit-based machines could work through data far faster than conventional computers. Forecasting and modeling complex scenarios are also areas where quantum computers are expected to excel. They could model various environments and outcomes, and as such could be used extensively in physics, chemistry, pharmaceutics, weather forecasting, and similar fields.

However, there are some drawbacks, too. Such computers aren’t of much practical use these days and serve only certain specialized purposes. This is mainly because they require special lab equipment and are too expensive to operate.

There is another issue connected with the development of the quantum computer. The top speed at which silicon chips can operate today is much lower than the one needed to test quantum technologies.

## Graphene Computers

First isolated in 2004, graphene gave rise to a new wave of research in electronics. This remarkable material possesses a couple of features that could allow it to become the future of computing.

Firstly, it conducts heat better than any other conductor used in electronics, including copper. Its electrons can also reportedly move up to two hundred times faster than those in silicon.

The top clock speed of silicon-based chips is a few gigahertz, typically around 3-4 GHz. This figure has barely changed since 2005, when the race for speed pushed the physical properties of silicon to their limit. Since then, scientists have been looking for a way past the maximum clock speed that silicon chips can provide. That is where graphene comes in.

In theory, graphene could allow speeds up to a thousand times higher than those of silicon chips. Graphene-based CPUs are also projected to consume up to a hundred times less energy than their silicon counterparts. On top of that, they would allow for smaller and more capable devices.

Today, there is no actual prototype of such a processor; it still exists only on paper. But scientists are working hard to come up with a real model that could revolutionize the world of computing.

However, there is one drawback. Silicon is a good semiconductor: it can not only carry electricity but also be switched off, so that it holds a state. Graphene, on the other hand, is not a semiconductor in its natural form. It has no band gap, so it carries electricity at very high speed but cannot easily be switched off or retain a charge.

As we all know well, binary logic requires transistors to turn on and off when we need them to. This lets the system retain a signal in order to save data for later use. For example, it is vital for RAM chips to keep the signal. Otherwise, our programs would shut down the moment they opened.

Graphene fails to retain signals because it conducts so readily that there is almost no distinction between the ‘on’ and ‘off’ states. This doesn’t mean that there is no place for graphene-based technologies in computing. They could still be used to move data at top speed and could probably be used in chips if combined with another technology.

Apart from the quantum and graphene technologies, there are some other ways for the CPU to develop in the future. Nevertheless, none of them seems to be more realistic than these two.